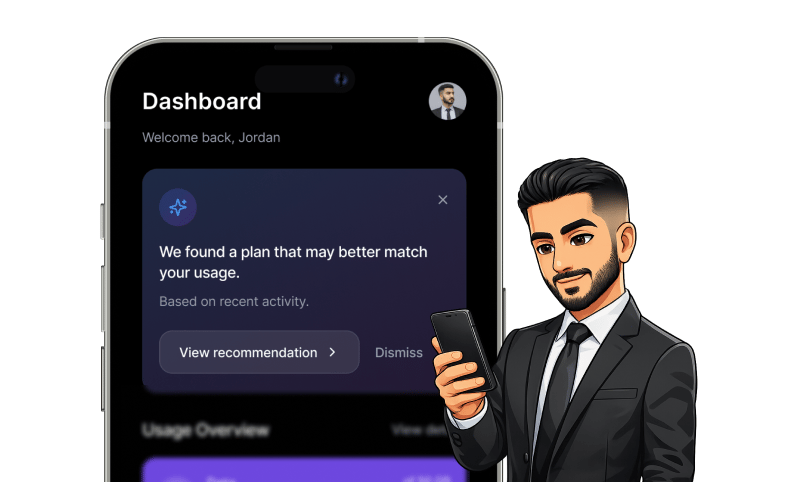

Jordan opens his telco self-care app.

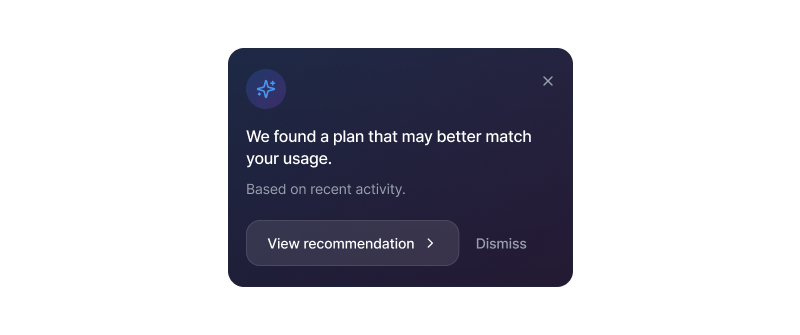

At the top of the dashboard, a soft gradient card appears:

Below it:

View recommendation

Dismiss

Nothing flashy.

No bold savings number.

No urgency.

Just a suggestion.

And that restraint is the design decision.

The UX Tension in AI Recommendation Systems

In intelligent products, the real tension isn’t automation vs manual decision-making.

It’s delegation vs control.

Delegation happens when users allow the system to analyse and recommend on their behalf.

Control exists when users retain the final say, even if they ultimately follow the system’s advice.

AI recommendation systems quietly shift this balance.

When suggestion and action are collapsed into one step, systems move from assistance to dominance.

Users may comply. But compliance is not trust.

Why This Telco Self-Care UI Feels Different

The dashboard card does something subtle but important.

It separates recommendation from commitment.

The wording is deliberate:

“May better match” introduces humility

“Based on recent activity” introduces reasoning

“View recommendation” invites exploration

“Dismiss” protects autonomy

The AI has already analysed Jordan’s usage.

It has compared plan structures.

It has identified a potential match.

But it stops short of authority.

That separation preserves user control.

And in high-stakes systems, control is everything.
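One way to picture that separation is as a small state machine. The sketch below is a minimal illustration, not how the app is actually built: the state names, the action strings, and the `RecommendationCard` class are all hypothetical. The point is the missing edge. Nothing leads straight from "suggested" to "confirmed".

```python
from dataclasses import dataclass
from enum import Enum


class CardState(Enum):
    SUGGESTED = "suggested"   # card is shown on the dashboard
    VIEWING = "viewing"       # user tapped "View recommendation"
    DISMISSED = "dismissed"   # user tapped "Dismiss"
    CONFIRMED = "confirmed"   # user explicitly accepted the plan change


# Allowed transitions (hypothetical action names).
# There is deliberately no (SUGGESTED, "confirm") entry:
# a suggestion cannot become a commitment in a single step.
TRANSITIONS = {
    (CardState.SUGGESTED, "view"): CardState.VIEWING,
    (CardState.SUGGESTED, "dismiss"): CardState.DISMISSED,
    (CardState.VIEWING, "confirm"): CardState.CONFIRMED,
    (CardState.VIEWING, "dismiss"): CardState.DISMISSED,
}


@dataclass
class RecommendationCard:
    plan_name: str
    state: CardState = CardState.SUGGESTED

    def apply(self, action: str) -> CardState:
        """Move to the next state, or raise if the action is not allowed."""
        key = (self.state, action)
        if key not in TRANSITIONS:
            raise ValueError(f"'{action}' is not allowed from {self.state.value}")
        self.state = TRANSITIONS[key]
        return self.state
```

In this sketch, "dismiss" is reachable from every state the user can be in before committing, while "confirm" only becomes available after the user has viewed the details.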

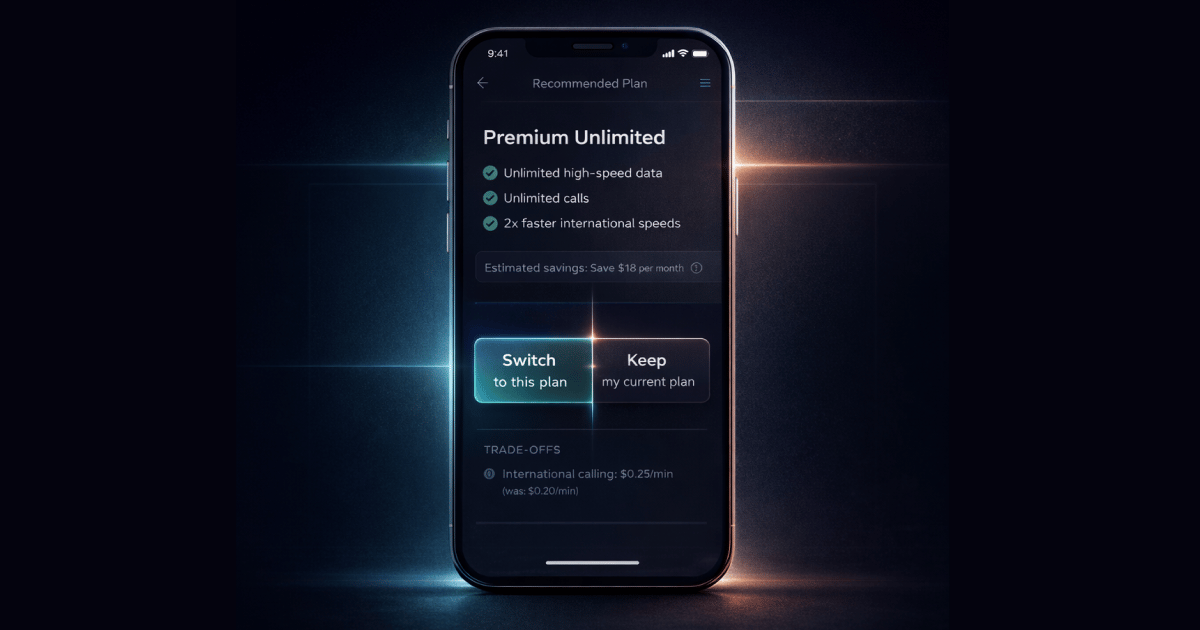

Why AI Plan Recommendations Are Riskier in Telco

AI recommendations behave differently in subscription products than in e-commerce or content platforms.

In a telco self-care app, changing a plan affects:

Monthly recurring costs

Contract duration

Roaming fees

Billing cycles

This is financial territory.

Users often do not fully understand downstream implications.

That asymmetry increases perceived risk.

When risk is high, users look for signals of control, not signals of optimisation.

Without a visible override, AI recommendations feel coercive rather than supportive.

What Research Says About AI Trust and User Control

Across industries, research consistently shows:

Users don’t reject AI because it’s inaccurate.

They reject it when it feels uncontrollable.

Key findings include:

75% of users express concern about AI bias or misinformation, especially when decisions appear opaque or final.

Interfaces with clear opt-outs significantly reduce perceived risk and increase trust.

Perceived fairness in AI systems influences user decisions more strongly than fairness in human-to-human interactions.

In other words:

A visible “Dismiss” button is not cosmetic.

It is a trust mechanism.

Human-in-the-Loop Design in Regulated Industries

In finance and insurance, human-in-the-loop (HITL) systems consistently show:

Higher perceived accountability

Reduced automation bias

Improved decision quality

Training users about AI limitations reduces blind acceptance.

Allowing users to remain responsible stabilises trust.

The lesson is not to remove automation.

The lesson is to design AI systems with visible boundaries.

AI can recommend.

Humans must remain responsible.
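In code, that boundary can be as simple as a default. The sketch below is an assumption-laden illustration, with hypothetical names throughout (`Recommendation`, `Decision`, `resolve`, and the plan labels in the test data are invented for this example). The system may propose a plan, but only an explicit "accept" from the user changes anything. Silence, dismissal, or any unrecognised input keeps the current plan.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Recommendation:
    current_plan: str
    suggested_plan: str
    reason: str  # shown to the user, e.g. "Based on recent activity"


@dataclass(frozen=True)
class Decision:
    plan: str      # the plan the user ends up on
    changed: bool  # True only if the user explicitly accepted


def resolve(rec: Recommendation, user_choice: Optional[str]) -> Decision:
    """Apply a recommendation only on an explicit 'accept'.

    user_choice is 'accept', 'dismiss', or None when the user has not
    responded. Anything other than 'accept' keeps the status quo.
    """
    if user_choice == "accept":
        return Decision(plan=rec.suggested_plan, changed=True)
    return Decision(plan=rec.current_plan, changed=False)
```

The design choice here is that the safe path is the default path: the function has to be told to change the plan, never told to leave it alone.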

The Business Risk of Removing Control

Many AI-powered optimisation systems focus on short-term conversion metrics.

Aggressive defaults and friction reduction may increase immediate plan switches.

But in high-stakes environments, trust erosion compounds over time.

When AI recommendations feel final:

Suspicion increases

Confidence decreases

Long-term retention suffers

In subscription businesses like telco, retention is built on trust, not pressure.

The Design Principle

In high-stakes AI systems:

Delegation without a visible override erodes trust faster than poor recommendations.

Control is not friction. Control is reassurance.

Sometimes, the most intelligent thing a system can say is:

“You can say no.”

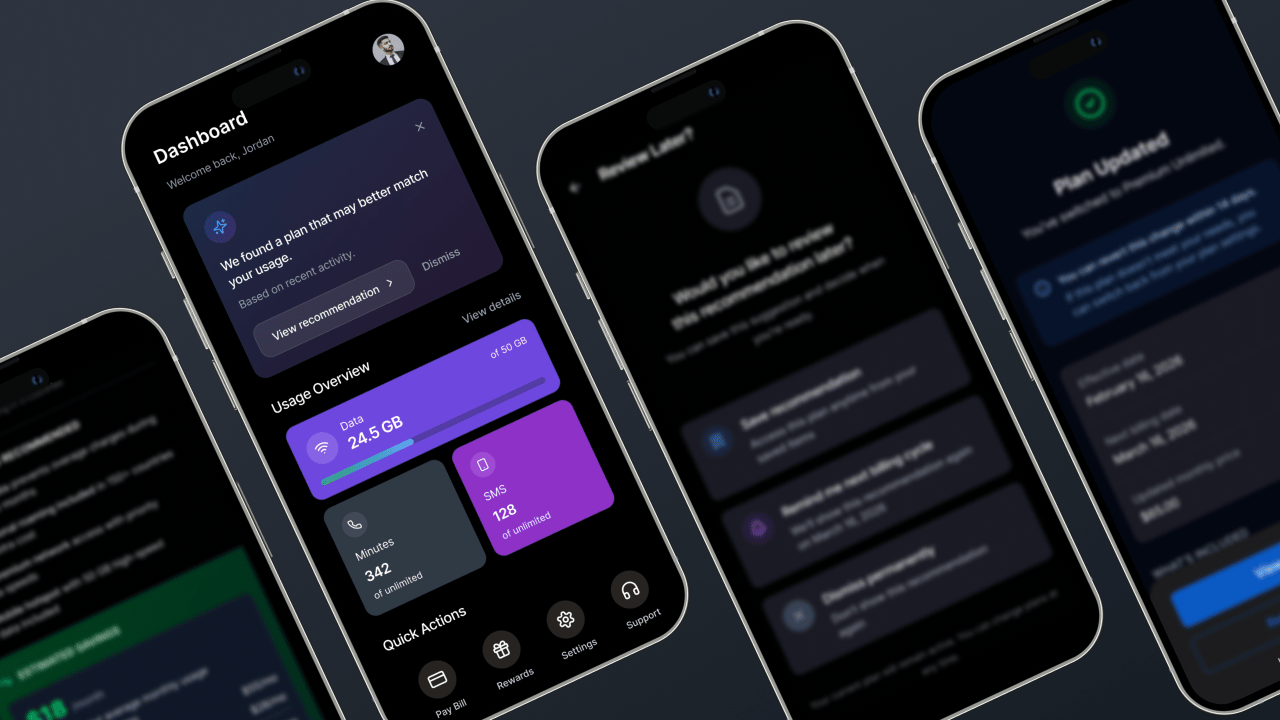

Beyond the Dashboard Card

This recommendation card is only the first boundary.

The deeper design questions emerge after Jordan taps “View recommendation”:

How much explanation is enough?

Where should hesitation be detected?

When should reversibility be introduced?

What trade-offs exist between conversion and long-term trust?

In the full Pattern Report, I break down:

The complete 4-screen AI plan recommendation flow

Behavioural traps such as automation bias and default bias

Real-world performance metrics from telco and regulated industries

A production-ready prototype walkthrough

If you’re designing AI systems that influence money, contracts, or risk, these details matter.